In this part of the blog series on moving seamlessly between SAP HANA hosting types using storage, the following data mobility scenarios are explored:

- Copy an SAP HANA instance to the same or a different FlashArray using storage snapshots and asynchronous replication

- Move an SAP HANA instance's persistent storage to a different FlashArray non-disruptively using ActiveCluster

- Fail over an SAP HANA instance with minimal disruption to a second site using ActiveDR

- Understand application consistency and crash consistency of the SAP HANA instance, and how each affects the method of migration

The other posts in this series can be found at these locations:

SAP HANA Data Mobility Part 1: Introduction

SAP HANA Data Mobility Part 2: pHANA on-premises migration techniques

SAP HANA Data Mobility Part 3: pHANA to vHANA and vHANA to pHANA

SAP HANA Data Mobility Part 4: Hybrid Cloud

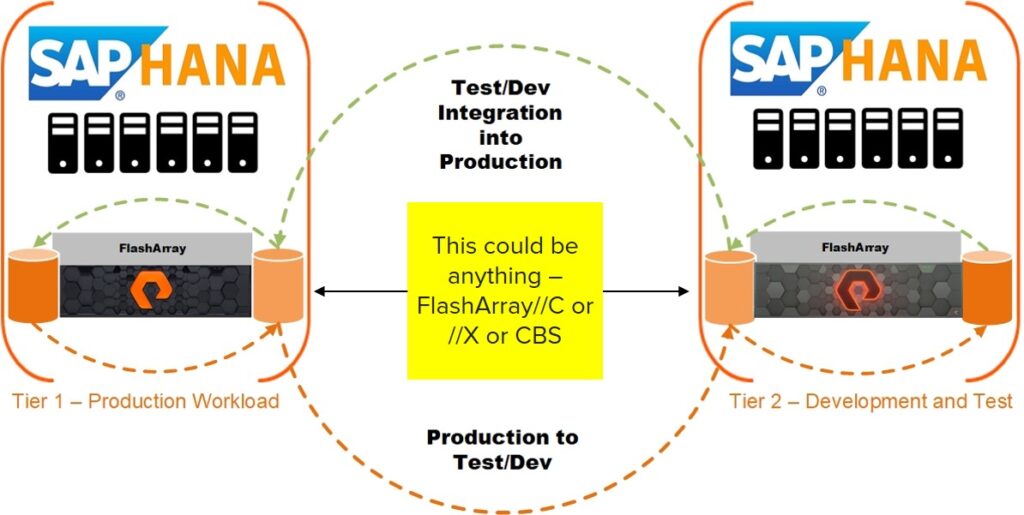

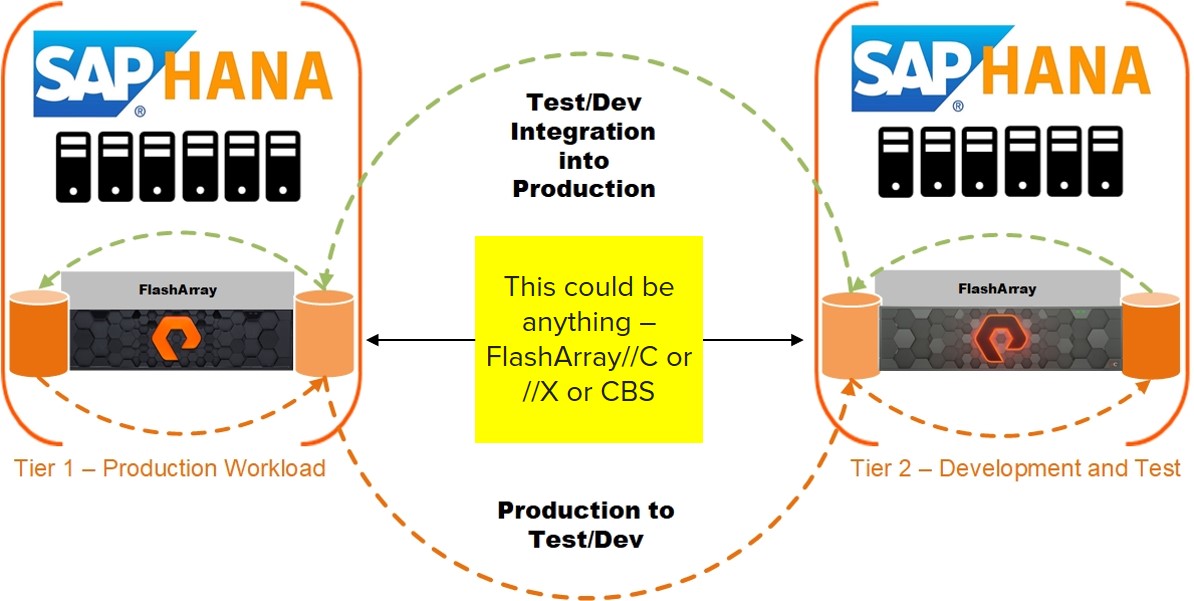

Several scenarios can require migrating an SAP HANA database deployed on bare-metal hardware, and the migration can take place at several levels. A given technique may apply to one or more use cases.

The deployment topology of the SAP HANA instance only affects the number of volumes copied or replicated and how the recovery process occurs.

Copying storage snapshots to replicate the instance to different servers or storage

Before a storage snapshot can be copied to a new volume and the SAP HANA instance recovered, it must first be created in one of two ways:

Application consistency – SAP HANA can create “Data Snapshots”, which place the instance and all of its tenant databases into the correct state for a storage snapshot to be taken and recovered from. For more information on this process please see this knowledge article on Pure Technical Services. This method requires only the SAP HANA data volume(s) to be copied.

Crash consistency – No interaction with the SAP HANA database is necessary, but both the log and data volumes must be part of a Protection Group. The storage snapshot is created for the Protection Group as opposed to on individual volumes.

For the purpose of this blog post I will use the FlashArray command line interface and a Protection Group called “SAP-HANA-PG” for all examples. Each example can also be performed using the graphical user interface, REST API, or SDKs.

Use Case 1: Copying the SAP HANA instance to a new server while continuing to use the same FlashArray storage

To list the protection groups and their volumes, execute the following:

purepgroup list

To create the storage snapshot of the protection group, execute the following:

purepgroup snap --suffix <suffix> <protection group name>

Once the protection group snapshot has been created, each member volume will have a snapshot with the naming convention “ProtectionGroup.Suffix.SourceVolume”. All snapshots on the array can be listed using the following command:

purevol list --snap

Once the snapshot is present on the array, it can be copied to a new volume using the command:

purevol copy <snapshot name> <new volume name>

There is now a new volume, called “SAP-HANA-Data-Copy” in this example, which can be connected to the new SAP HANA server. This process can be repeated for any volume on the array.
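The steps in use case 1 can be sketched as a small dry-run script. It only prints the commands so they can be reviewed before being run on the array. The helper names, the suffix `migration1`, and the source volume name `SAP-HANA-Data` are assumptions made for illustration; only the protection group name comes from this post.

```shell
# Sketch only: prints the Purity commands for use case 1 rather than running them.
# PGROUP comes from the post; SUFFIX and VOLUME are assumed example values.
PGROUP="SAP-HANA-PG"
SUFFIX="migration1"
VOLUME="SAP-HANA-Data"

# Build a member snapshot name following ProtectionGroup.Suffix.SourceVolume.
snapshot_name() {
  printf '%s.%s.%s\n' "$1" "$2" "$3"
}

# Print the command sequence in the order described above.
use_case_1_commands() {
  snap="$(snapshot_name "$PGROUP" "$SUFFIX" "$VOLUME")"
  echo "purepgroup snap --suffix $SUFFIX $PGROUP"
  echo "purevol list --snap"
  echo "purevol copy $snap ${VOLUME}-Copy"
}

use_case_1_commands
```

Printing rather than executing keeps the sketch safe to run anywhere; the printed lines can then be pasted into an SSH session on the FlashArray.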

Use Case 2: Copying the SAP HANA instance to a new server and a different FlashArray

This follows a similar process to use case 1, but the volume snapshot(s) are replicated before being restored.

To replicate the storage snapshot, the source and target arrays must first be connected for asynchronous replication. Start by retrieving the connection key on the target array with the following command:

purearray list --connection-key

Once the connection key for the target array has been found, the two arrays can be connected using async-replication. To connect them, execute the following on the source array and supply the connection key when prompted:

purearray connect --management-address <target array> --type async-replication --connection-key

To replicate storage snapshots to a different array, or to a public cloud using Purity CloudSnap, a replication target needs to be added to the protection group. This can be done using the following command:

purepgroup setattr --targetlist <Target name> <Protection group name>

At this point a storage snapshot can be created for the protection group and replicated to the target array.

purepgroup snap --suffix <suffix name> --replicate-now <Protection group name>

The replication process can be monitored from the target array using the following command:

purepgroup monitor <source array name>:<protection group name> --replication

Once replication has completed, all of the replicated storage snapshots can be seen using the following command:

purepgroup list <source array>:<protection group name>

At the point where snapshots can be seen on the target they can be copied to new volumes and connected to the relevant hosts.
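On the target array, the replicated protection group is addressed with the source array name as a prefix, as in the `purepgroup list` command above. The sketch below builds those names and prints the matching copy command. It assumes that each replicated member snapshot keeps the same prefix followed by the suffix and volume name, which should be confirmed with `purevol list --snap` on the target. The array name `flasharray-a`, the suffix, and the volume name are hypothetical.

```shell
# Sketch only: builds names and prints commands; nothing runs against an array.
# SOURCE_ARRAY, SUFFIX and VOLUME are assumed example values, not from the post.
SOURCE_ARRAY="flasharray-a"
PGROUP="SAP-HANA-PG"
SUFFIX="migration1"
VOLUME="SAP-HANA-Data"

# Name of the replicated protection group as addressed on the target array.
replicated_pgroup() {
  printf '%s:%s\n' "$1" "$2"
}

# Assumed name of one replicated member snapshot on the target array.
replicated_snapshot() {
  printf '%s.%s.%s\n' "$(replicated_pgroup "$1" "$2")" "$3" "$4"
}

echo "purepgroup list $(replicated_pgroup "$SOURCE_ARRAY" "$PGROUP")"
echo "purevol copy $(replicated_snapshot "$SOURCE_ARRAY" "$PGROUP" "$SUFFIX" "$VOLUME") ${VOLUME}-Copy"
```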

Migrating an SAP HANA instance to different storage non-disruptively

Using Purity ActiveCluster as a data mobility solution allows for volumes to be migrated to another FlashArray non-disruptively. This assumes that the host(s) which the SAP HANA instance is deployed on have access to the target and source storage simultaneously.

Use case: Migrate the SAP HANA instance from one FlashArray to another without interrupting instance availability

This use case should only be implemented in scenarios where the round trip latency between source and target arrays is less than 5ms.
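As a rough first check of the 5ms limit, the average round trip time reported by `ping` can be compared against the threshold. This is a sketch with hypothetical helper names. It parses the summary line printed by Linux `ping`, and a host-side ping is only an approximation of the latency seen on the replication network between the arrays.

```shell
# Sketch only: helper names are hypothetical; input is ping summary text.

# Extract the average RTT in ms from a ping summary line read on stdin.
avg_rtt_ms() {
  awk -F'/' '/^(rtt|round-trip)/ { print $5 }'
}

# Succeed only if an RTT value was given and it is below the limit (default 5 ms).
rtt_within_limit() {
  [ -n "$1" ] || return 1
  awk -v rtt="$1" -v max="${2:-5}" 'BEGIN { exit !(rtt + 0 < max + 0) }'
}

# Canned summary line so the sketch runs without network access.
sample='rtt min/avg/max/mdev = 0.321/0.402/0.512/0.071 ms'
rtt="$(printf '%s\n' "$sample" | avg_rtt_ms)"
if rtt_within_limit "$rtt"; then
  echo "average RTT ${rtt} ms is under 5 ms"
else
  echo "average RTT ${rtt:-unknown} ms is not under 5 ms"
fi
```

In practice the canned line would be replaced by real output, for example by piping `ping -c 10 <address>` into `avg_rtt_ms`.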

ActiveCluster requires the source and target arrays to be connected for synchronous replication. Start by retrieving the connection key on the target array with the following command:

purearray list --connection-key

Once the connection key for the target array has been found, the two arrays can be connected using sync-replication. To connect them, execute the following on the source array:

purearray connect --management-address <target array> --type sync-replication --connection-key

Once sync-replication connectivity between the two arrays is established, a POD needs to be created. In the examples that follow, the POD name is “SAP-HANA-POD”.

To create a POD use the following command on the source array:

purepod create <POD name>

Once the POD has been created, the volumes to migrate non-disruptively need to be moved into it. This can be done using the following command:

purevol move <volume names> <POD name>

To replicate the volumes to the target array, add the array to the POD using the following command:

purepod add --array <target array> <POD name>

Once the array has been added as a target, data movement begins by resyncing the arrays so that both hold the most up-to-date data. To view the synchronization status of each array, execute the following command:

purepod list <POD name>

Once the volume has been replicated and is available on source and target arrays the status for both will be online.

At this point the host must be connected to the volumes from the target array. To do this, execute the following command on the target array for each volume:

purehost connect --vol <Volume Name> <Host>

The operating system (in this case SLES for SAP) needs to be informed that there are new paths for the storage devices. These new paths will be mapped onto the existing devices because the synchronously replicated volumes retain the same serial numbers.

To rescan the storage devices, use the “rescan-scsi-bus.sh” utility with the -a argument on the SAP HANA host. The important output to note is the line “xx new or changed device(s) found”:

rescan-scsi-bus.sh -a

    Scanning SCSI subsystem for new devices
    Scanning host 0 for SCSI target IDs 0 1 2 3 4 5 6 7, all LUNs
    Scanning for device 0 1 6 0 ...
    ……….
    ……….
    16 new or changed device(s) found.
    [2:0:10:1] [2:0:10:2] [2:0:11:1] [2:0:11:2] [2:0:8:1] [2:0:8:2] [2:0:9:1] [2:0:9:2] [6:0:10:1] [6:0:10:2] [6:0:11:1] [6:0:11:2] [6:0:12:1] [6:0:12:2] [6:0:13:1] [6:0:13:2]
    0 remapped or resized device(s) found.
    0 device(s) removed.
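Because the source paths are about to be disconnected, it can help to check the rescan summary in a script rather than by eye. The following is a minimal sketch with hypothetical helper names. It assumes the summary line appears on its own line, beginning with the count, as the utility normally prints it.

```shell
# Sketch only: helper names are hypothetical.

# Print the count from the "new or changed device(s) found" line read on stdin.
new_device_count() {
  sed -n 's/^[[:space:]]*\([0-9][0-9]*\) new or changed device(s) found.*/\1/p'
}

# Succeed only if at least one new or changed device was reported on stdin.
rescan_found_devices() {
  count="$(new_device_count)"
  [ -n "$count" ] && [ "$count" -gt 0 ]
}

# Canned summary lines so the sketch runs without touching the SCSI bus.
sample='16 new or changed device(s) found.
0 remapped or resized device(s) found.
0 device(s) removed.'
printf '%s\n' "$sample" | new_device_count
```

On the SAP HANA host the same check could be applied to live output, for example `rescan-scsi-bus.sh -a | rescan_found_devices || echo "no new paths found"`.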

When all new device paths have been discovered, the host connection from the source array can be removed. To do this, execute the following command on the source array for each volume:

purehost disconnect --vol <Volume Name> <Host>

When the volumes are disconnected from the host, the paths to the source array for the multipath device(s) will be shown as faulty, as in the output below:

    3624a93701b16eddfb96a4c38000142d9 dm-6 PURE,FlashArray
    size=1.0T features='0' hwhandler='1 alua' wp=rw
    `-+- policy='queue-length 0' prio=50 status=enabled
      |- 2:0:2:1 sde 8:64 failed faulty running
      |- 2:0:3:1 sdg 8:96 failed faulty running
      |- 11:0:1:1 sdbo 68:32 failed faulty running
      |- 11:0:0:1 sdbm 68:0 failed faulty running
      |- 2:0:8:1 sdbu 68:128 active ready running
      |- 2:0:9:1 sdbw 68:160 active ready running
      |- 2:0:10:1 sdby 68:192 active ready running
      |- 2:0:11:1 sdca 68:224 active ready running
      |- 6:0:10:1 sdcc 69:0 active ready running
      |- 6:0:11:1 sdce 69:32 active ready running
      |- 6:0:12:1 sdcg 69:64 active ready running
      `- 6:0:13:1 sdci 69:96 active ready running
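Before the failed paths are cleaned up, it is worth confirming that active paths remain. This sketch counts paths by state in output of the kind shown above, which is what `multipath -ll` prints. The helper names are hypothetical and the sample is abbreviated from the output in this post.

```shell
# Sketch only: helper names are hypothetical.

# Count path lines in a given state ("failed faulty" or "active ready") on stdin.
count_paths() {
  grep -c " $1 " || true
}

# Abbreviated sample of the multipath output shown in this post.
sample_multipath() {
  cat <<'EOF'
3624a93701b16eddfb96a4c38000142d9 dm-6 PURE,FlashArray
size=1.0T features='0' hwhandler='1 alua' wp=rw
`-+- policy='queue-length 0' prio=50 status=enabled
  |- 2:0:2:1 sde 8:64 failed faulty running
  |- 2:0:3:1 sdg 8:96 failed faulty running
  |- 2:0:8:1 sdbu 68:128 active ready running
  `- 6:0:13:1 sdci 69:96 active ready running
EOF
}

failed="$(sample_multipath | count_paths 'failed faulty')"
active="$(sample_multipath | count_paths 'active ready')"
echo "failed=$failed active=$active"   # prints: failed=2 active=2
```

Against a live host, `multipath -ll | count_paths 'active ready'` should return a non-zero count before the failed paths are removed.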

To remove all of the failed paths, run the rescan-scsi-bus.sh utility on the host operating system with the -r argument:

rescan-scsi-bus.sh -r

    Syncing file systems
    Scanning SCSI subsystem for new devices and remove devices that have disappeared
    Scanning host 0 for SCSI target IDs 0 1 2 3 4 5 6 7, all LUNs
    ………
    ………
    sg74 changed: INQUIRY failed .
    REM: Host: scsi11 Channel: 00 Id: 03 Lun: 02
    DEL: Vendor: PURE Model: FlashArray Rev: 8888
    Type: Direct-Access ANSI SCSI revision: 06
    0 new or changed device(s) found.
    0 remapped or resized device(s) found.
    64 device(s) removed.
    [2:0:2:1] [2:0:2:2] [2:0:3:1] [2:0:3:2] [2:0:4:1] [2:0:4:2] [2:0:5:1] [2:0:5:2] [4:0:0:1] [4:0:0:2] ……..

The final steps to complete the migration are to remove the source array from the POD and then move the volumes out of the POD on the target array. Optionally, the POD can be deleted and the sync-replication connection between the arrays removed.

To remove the source array from the POD:

purepod remove --array <source array> <POD name>

To move the volumes out of the POD:

purevol move <volume list> ""

To delete the POD once all of the volumes have been moved out:

purepod destroy <POD name>
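Since the order of the ActiveCluster steps matters (new paths must be live before the old ones are removed), the whole procedure can be summarised as a dry-run runbook. This sketch only prints the steps. Placeholders in angle brackets are kept exactly as in the commands above, and the location labels follow the text where it states them and are otherwise assumptions.

```shell
# Dry-run sketch: prints the ordered steps with where each runs; nothing is executed.
# POD name is the one used in this post; location labels are partly assumed.
POD="SAP-HANA-POD"

activecluster_runbook() {
  cat <<EOF
[source array] purepod create $POD
[source array] purevol move <volume names> $POD
[source array] purepod add --array <target array> $POD
[source array] purepod list $POD
[target array] purehost connect --vol <Volume Name> <Host>
[HANA host]    rescan-scsi-bus.sh -a
[source array] purehost disconnect --vol <Volume Name> <Host>
[HANA host]    rescan-scsi-bus.sh -r
[target array] purepod remove --array <source array> $POD
[target array] purevol move <volume list> ""
[target array] purepod destroy $POD
EOF
}

activecluster_runbook
```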

Failing over an SAP HANA system to a second site using storage replication

Purity ActiveDR continuously replicates data between two FlashArrays. This is near-synchronous replication which can be used at any distance between the source and target arrays. The failover process using this replication technique is minimally disruptive to the SAP HANA instance.

This topic is covered extensively in the blog post: Disaster Recovery and Planned Migration for SAP with ActiveDR.

Further information on the process of failing over an SAP HANA instance can be found in the SAP HANA Host failover with ActiveCluster and ActiveDR knowledge article.

In the next part of this series, Part 3: pHANA to vHANA and vHANA to pHANA, the concepts discussed in this blog post will be built upon by showing how they can be used to move between physical and virtual platforms.